Ray Caster

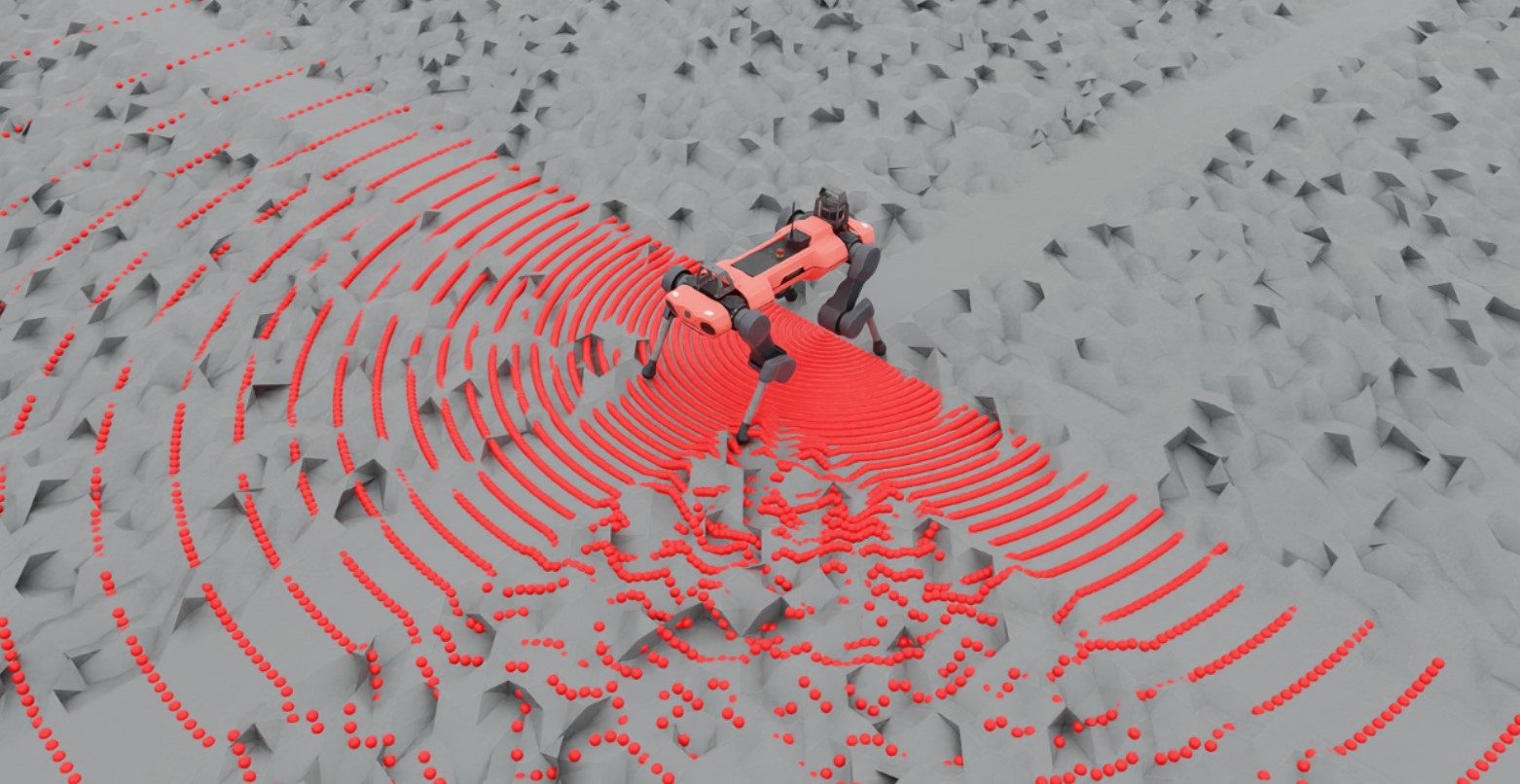

The Ray Caster sensor (and the ray caster camera) resemble RTX-based rendering in that both cast rays. The difference is that rays cast by the Ray Caster sensor return only collision information along the cast, and the direction of each ray can be specified individually. The rays do not bounce, and they are not affected by material properties or opacity. For each ray specified by the sensor, a line is traced along the ray's path and the location of the first collision with the specified mesh is returned. Some of our quadruped examples use this method to measure the local height field.
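To make the "first collision" idea concrete, here is a minimal pure-Python sketch that casts one ray against a small triangle mesh and keeps the nearest hit. It is a conceptual illustration only: Isaac Lab performs the tracing in Warp, and the helper names (ray_triangle_distance, first_hit) as well as the choice to report a miss as (inf, inf, inf) are assumptions made for this example, not the library's API.

```python
import math

Vec3 = tuple[float, float, float]
Triangle = tuple[Vec3, Vec3, Vec3]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def _cross(a: Vec3, b: Vec3) -> Vec3:
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def ray_triangle_distance(origin: Vec3, direction: Vec3, tri: Triangle, eps: float = 1e-9) -> float:
    """Moller-Trumbore test: distance along the ray to the triangle, or inf on a miss."""
    v0, v1, v2 = tri
    e1, e2 = _sub(v1, v0), _sub(v2, v0)
    p = _cross(direction, e2)
    det = _dot(e1, p)
    if abs(det) < eps:  # ray is parallel to the triangle plane
        return math.inf
    inv_det = 1.0 / det
    t_vec = _sub(origin, v0)
    u = _dot(t_vec, p) * inv_det
    if u < 0.0 or u > 1.0:
        return math.inf
    q = _cross(t_vec, e1)
    v = _dot(direction, q) * inv_det
    if v < 0.0 or u + v > 1.0:
        return math.inf
    t = _dot(e2, q) * inv_det
    return t if t > eps else math.inf  # hits behind the origin do not count


def first_hit(origin: Vec3, direction: Vec3, triangles: list[Triangle]) -> Vec3:
    """Return the closest hit point along the ray, or (inf, inf, inf) if nothing is hit."""
    t = min((ray_triangle_distance(origin, direction, tri) for tri in triangles), default=math.inf)
    if math.isinf(t):
        return (math.inf, math.inf, math.inf)
    return (origin[0] + t * direction[0], origin[1] + t * direction[1], origin[2] + t * direction[2])


# A flat 2 m x 2 m ground patch at z = 0, built from two triangles.
ground = [
    ((-1.0, -1.0, 0.0), (1.0, -1.0, 0.0), (1.0, 1.0, 0.0)),
    ((-1.0, -1.0, 0.0), (1.0, 1.0, 0.0), (-1.0, 1.0, 0.0)),
]
sensor_pos = (0.25, 0.5, 0.5)
hit_down = first_hit(sensor_pos, (0.0, 0.0, -1.0), ground)  # ray pointing at the ground
hit_up = first_hit(sensor_pos, (0.0, 0.0, 1.0), ground)  # ray pointing away from it
```

The downward ray reports a point on the patch directly below the sensor, while the upward ray never meets the mesh and reports only inf values. Subtracting the z component of each hit from the sensor height is the kind of computation a height-field measurement would build on.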

To keep the sensor performant when there are many cloned environments, the line tracing is done directly in Warp. This is why the meshes to cast against must be identified explicitly: their mesh data is loaded onto the device by Warp when the sensor is initialized. As a consequence, the current iteration of this sensor only works with static meshes (meshes that are not changed from the defaults specified in their USD file). This constraint will be removed in future releases.

Using a ray caster sensor requires a pattern and a parent xform to attach the sensor to. The pattern defines how the rays are cast, while the prim properties define the orientation and position of the sensor (additional offsets can be specified for more exact placement). Isaac Lab supports a number of ray casting pattern configurations, including a generic LIDAR pattern and a grid pattern.

    @configclass
    class RaycasterSensorSceneCfg(InteractiveSceneCfg):
        """Design the scene with sensors on the robot."""

        # ground plane
        ground = AssetBaseCfg(
            prim_path="/World/Ground",
            spawn=sim_utils.UsdFileCfg(
                usd_path=f"{ISAAC_NUCLEUS_DIR}/Environments/Terrains/rough_plane.usd",
                scale=(1, 1, 1),
            ),
        )

        # lights
        dome_light = AssetBaseCfg(
            prim_path="/World/Light", spawn=sim_utils.DomeLightCfg(intensity=3000.0, color=(0.75, 0.75, 0.75))
        )

        # robot
        robot = ANYMAL_C_CFG.replace(prim_path="{ENV_REGEX_NS}/Robot")

        ray_caster = RayCasterCfg(
            prim_path="{ENV_REGEX_NS}/Robot/base",
            update_period=1 / 60,
            offset=RayCasterCfg.OffsetCfg(pos=(0, 0, 0.5)),
            mesh_prim_paths=["/World/Ground"],
            ray_alignment="yaw",
            pattern_cfg=patterns.LidarPatternCfg(
                channels=100, vertical_fov_range=[-90, 90], horizontal_fov_range=[-90, 90], horizontal_res=1.0
            ),
            debug_vis=not args_cli.headless,
        )

Notice that the units in the pattern config are degrees! Also, we enable visualization here (debug_vis) to show the pattern explicitly in the rendering, but this is not required and should be disabled when tuning for performance.
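Because the FOV ranges and the resolution are given in degrees, any trigonometry on them needs a conversion to radians first. The sketch below shows that conversion for a single ray. It uses an assumed convention (x forward, z up, elevation measured from the horizontal plane), and the helper lidar_ray_direction is hypothetical; it is not meant to reproduce how Isaac Lab builds its pattern internally.

```python
import math


def lidar_ray_direction(elevation_deg: float, azimuth_deg: float) -> tuple[float, float, float]:
    """Unit direction for a ray given in degrees (assumed convention: x forward, z up)."""
    el = math.radians(elevation_deg)  # degrees -> radians before calling cos/sin
    az = math.radians(azimuth_deg)
    return (math.cos(el) * math.cos(az), math.cos(el) * math.sin(az), math.sin(el))


forward = lidar_ray_direction(0.0, 0.0)  # level ray, straight ahead
down_a = lidar_ray_direction(-90.0, -90.0)  # bottom pole, one azimuth
down_b = lidar_ray_direction(-90.0, 45.0)  # bottom pole, a different azimuth
```

At an elevation of -90 degrees every azimuth collapses (up to floating-point error) to the same downward direction, so a FOV that includes a pole produces repeated rays.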

Querying the sensor for data can be done at simulation run time like any other sensor.

    def run_simulator(sim: sim_utils.SimulationContext, scene: InteractiveScene):
        .
        .
        .
        # Simulate physics
        while simulation_app.is_running():
            .
            .
            .
            # print information from the sensors
            print("-------------------------------")
            print(scene["ray_caster"])
            print("Ray cast hit results: ", scene["ray_caster"].data.ray_hits_w)

    -------------------------------
    Ray-caster @ '/World/envs/env_.*/Robot/base/lidar_cage':
        view type            : <class 'isaacsim.core.experimental.prims.xform_prim.XformPrim'>
        update period (s)    : 0.016666666666666666
        number of meshes     : 1
        number of sensors    : 1
        number of rays/sensor: 18000
        total number of rays : 18000
    Ray cast hit results: tensor([[[-0.3698, 0.0357, 0.0000],
             [-0.3698, 0.0357, 0.0000],
             [-0.3698, 0.0357, 0.0000],
             ...,
             [ inf, inf, inf],
             [ inf, inf, inf],
             [ inf, inf, inf]]], device='cuda:0')
    -------------------------------

Here we can see the data returned by the sensor itself. Notice first that the tensor opens and closes with three nested brackets: this is because the returned data is batched by the number of sensors. The ray cast pattern itself has also been flattened, so the dimensions of the array are [N, B, 3], where N is the number of sensors, B is the number of rays cast in the pattern, and 3 is the dimension of the casting space. The inf entries at the end of the output correspond to rays that did not hit the mesh. Finally, notice that the first several values in this casting pattern are the same: this is because the lidar pattern is spherical and we have specified our FOV to be hemispherical, which includes the poles. In this configuration, the "flattening pattern" becomes apparent: the first 180 entries are identical because they all belong to the bottom pole of the hemisphere, and there are 180 of them because our horizontal FOV is 180 degrees with a resolution of 1 degree.
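The flattening described above can be undone with simple index arithmetic. The sketch below uses plain Python lists and a tiny made-up pattern (3 channels of 4 horizontal rays) rather than real sensor output. It assumes, as the repeated pole entries suggest, that all horizontal rays of one channel are stored contiguously; the helper names are hypothetical.

```python
import math

NUM_CHANNELS, NUM_HORIZONTAL = 3, 4  # made-up sizes, B = 3 * 4 = 12 rays


def flat_index(channel: int, horizontal: int, num_horizontal: int) -> int:
    """Position of a ray in the flattened pattern (assumed channel-major order)."""
    return channel * num_horizontal + horizontal


def split_channels(hits: list, num_horizontal: int) -> list:
    """Regroup a flat list of B hits into one list per channel."""
    return [hits[i : i + num_horizontal] for i in range(0, len(hits), num_horizontal)]


def is_miss(hit: tuple) -> bool:
    """A ray that hit nothing is stored as inf in every component."""
    return any(math.isinf(c) for c in hit)


# Synthetic data for one sensor: a pole channel (identical hits),
# a channel of distinct hits, and a channel of misses.
pole = (-0.37, 0.04, 0.0)
sensor_hits = (
    [pole] * NUM_HORIZONTAL
    + [(0.1 * h, 0.2, 0.0) for h in range(NUM_HORIZONTAL)]
    + [(math.inf, math.inf, math.inf)] * NUM_HORIZONTAL
)
ray_hits = [sensor_hits]  # nested shape [N=1, B=12, 3]

channels = split_channels(ray_hits[0], NUM_HORIZONTAL)
misses_per_channel = [sum(is_miss(h) for h in ch) for ch in channels]
```

With the real tensor, the same regrouping would be a reshape from [N, B, 3] to [N, channels, rays per channel, 3]. Which axis varies fastest is an assumption here and is worth checking against data saved from the script.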

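You can use this script to experiment with pattern configurations and build an intuition for how the data is stored by changing the triggered variable in run_simulator (line 83 of the listing). When triggered is set to False, the script waits for a short countdown and then saves the ray hit data to cast_data.npy.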

Code for raycaster_sensor.py

    # Copyright (c) 2022-2026, The Isaac Lab Project Developers (https://github.com/isaac-sim/IsaacLab/blob/main/CONTRIBUTORS.md).
    # All rights reserved.
    #
    # SPDX-License-Identifier: BSD-3-Clause

    import argparse

    from isaaclab.app import AppLauncher

    # add argparse arguments
    parser = argparse.ArgumentParser(description="Example on using the raycaster sensor.")
    parser.add_argument("--num_envs", type=int, default=1, help="Number of environments to spawn.")
    # append AppLauncher cli args
    AppLauncher.add_app_launcher_args(parser)
    # demos should open Kit visualizer by default
    parser.set_defaults(visualizer=["kit"])
    # parse the arguments
    args_cli = parser.parse_args()

    # launch omniverse app
    app_launcher = AppLauncher(args_cli)
    simulation_app = app_launcher.app

    """Rest everything follows."""

    import numpy as np
    import torch

    import isaaclab.sim as sim_utils
    from isaaclab.assets import AssetBaseCfg
    from isaaclab.scene import InteractiveScene, InteractiveSceneCfg
    from isaaclab.sensors.ray_caster import RayCasterCfg, patterns
    from isaaclab.utils.assets import ISAAC_NUCLEUS_DIR
    from isaaclab.utils.configclass import configclass

    ##
    # Pre-defined configs
    ##
    from isaaclab_assets.robots.anymal import ANYMAL_C_CFG  # isort: skip


    @configclass
    class RaycasterSensorSceneCfg(InteractiveSceneCfg):
        """Design the scene with sensors on the robot."""

        # ground plane
        ground = AssetBaseCfg(
            prim_path="/World/Ground",
            spawn=sim_utils.UsdFileCfg(
                usd_path=f"{ISAAC_NUCLEUS_DIR}/Environments/Terrains/rough_plane.usd",
                scale=(1, 1, 1),
            ),
        )

        # lights
        dome_light = AssetBaseCfg(
            prim_path="/World/Light", spawn=sim_utils.DomeLightCfg(intensity=3000.0, color=(0.75, 0.75, 0.75))
        )

        # robot
        robot = ANYMAL_C_CFG.replace(prim_path="{ENV_REGEX_NS}/Robot")

        ray_caster = RayCasterCfg(
            prim_path="{ENV_REGEX_NS}/Robot/base",
            update_period=1 / 60,
            offset=RayCasterCfg.OffsetCfg(pos=(0, 0, 0.5)),
            mesh_prim_paths=["/World/Ground"],
            ray_alignment="yaw",
            pattern_cfg=patterns.LidarPatternCfg(
                channels=100, vertical_fov_range=[-90, 90], horizontal_fov_range=[-90, 90], horizontal_res=1.0
            ),
            debug_vis=not args_cli.headless,
        )


    def run_simulator(sim: sim_utils.SimulationContext, scene: InteractiveScene):
        """Run the simulator."""
        # Define simulation stepping
        sim_dt = sim.get_physics_dt()
        sim_time = 0.0
        count = 0

        triggered = True
        countdown = 42

        # Simulate physics
        while simulation_app.is_running():
            if count % 500 == 0:
                # reset counter
                count = 0
                # reset the scene entities
                # root state
                # we offset the root state by the origin since the states are written in simulation world frame
                # if this is not done, then the robots will be spawned at the (0, 0, 0) of the simulation world
                root_pose = scene["robot"].data.default_root_pose.torch.clone()
                root_pose[:, :3] += scene.env_origins
                scene["robot"].write_root_pose_to_sim_index(root_pose=root_pose)
                root_vel = scene["robot"].data.default_root_vel.torch.clone()
                scene["robot"].write_root_velocity_to_sim_index(root_velocity=root_vel)
                # set joint positions with some noise
                joint_pos, joint_vel = (
                    scene["robot"].data.default_joint_pos.torch.clone(),
                    scene["robot"].data.default_joint_vel.torch.clone(),
                )
                joint_pos += torch.rand_like(joint_pos) * 0.1
                scene["robot"].write_joint_position_to_sim_index(position=joint_pos)
                scene["robot"].write_joint_velocity_to_sim_index(velocity=joint_vel)
                # clear internal buffers
                scene.reset()
                print("[INFO]: Resetting robot state...")
            # Apply default actions to the robot
            # -- generate actions/commands
            targets = scene["robot"].data.default_joint_pos.torch
            # -- apply action to the robot
            scene["robot"].set_joint_position_target_index(target=targets)
            # -- write data to sim
            scene.write_data_to_sim()
            # perform step
            sim.step()
            # update sim-time
            sim_time += sim_dt
            count += 1
            # update buffers
            scene.update(sim_dt)

            # print information from the sensors
            print("-------------------------------")
            print(scene["ray_caster"])
            print("Ray cast hit results: ", scene["ray_caster"].data.ray_hits_w.torch)

            if not triggered:
                if countdown > 0:
                    countdown -= 1
                    continue
                data = scene["ray_caster"].data.ray_hits_w.torch.cpu().numpy()
                np.save("cast_data.npy", data)
                triggered = True
            else:
                continue


    def main():
        """Main function."""

        # Initialize the simulation context
        sim_cfg = sim_utils.SimulationCfg(dt=0.005, device=args_cli.device)
        sim = sim_utils.SimulationContext(sim_cfg)
        # Set main camera
        sim.set_camera_view(eye=[3.5, 3.5, 3.5], target=[0.0, 0.0, 0.0])
        # design scene
        scene_cfg = RaycasterSensorSceneCfg(num_envs=args_cli.num_envs, env_spacing=2.0)
        scene = InteractiveScene(scene_cfg)
        # Play the simulator
        sim.reset()
        # Now we are ready!
        print("[INFO]: Setup complete...")
        # Run the simulator
        run_simulator(sim, scene)


    if __name__ == "__main__":
        # run the main function
        main()
        # close sim app
        simulation_app.close()

164 # close sim app

165 simulation_app.close()